AI transformation is, at its core, a problem of governance. Organizations and governments alike are racing to integrate artificial intelligence into core operations, decision-making processes, and public services. Yet the absence of robust oversight structures often leads to fragmented implementation, ethical dilemmas, and unintended societal consequences. This article explores why governance has become the central bottleneck in harnessing AI’s potential responsibly.

Understanding AI Transformation: Beyond Technology Hype

AI transformation refers to the systematic integration of artificial intelligence technologies—such as machine learning, natural language processing, and predictive analytics—into business models, government operations, and everyday workflows. It promises enhanced efficiency, data-driven insights, and innovative solutions to complex problems. However, this shift is not merely technical; it reshapes power dynamics, accountability, and human oversight.

Many leaders view AI as a tool for competitive advantage or operational streamlining. Yet, without clear governance, AI adoption can amplify existing inequalities or create new vulnerabilities. For instance, automated decision systems in hiring or resource allocation may inadvertently perpetuate biases if not properly monitored. This is where governance enters the picture: it provides the frameworks for ethical deployment, risk management, and stakeholder alignment.

As with broader digital transformation efforts, AI requires more than investment in tools: it demands structured policies that align innovation with public interest and organizational values. Without these, even the most advanced AI systems risk becoming liabilities rather than assets.

The Governance Gap: Why AI Outpaces Oversight

One core reason AI transformation is a problem of governance lies in the stark mismatch between technological speed and institutional response times. New AI capabilities emerge from global research labs and open-source communities within weeks. In contrast, policy-making and regulatory processes operate on timescales of months or years.

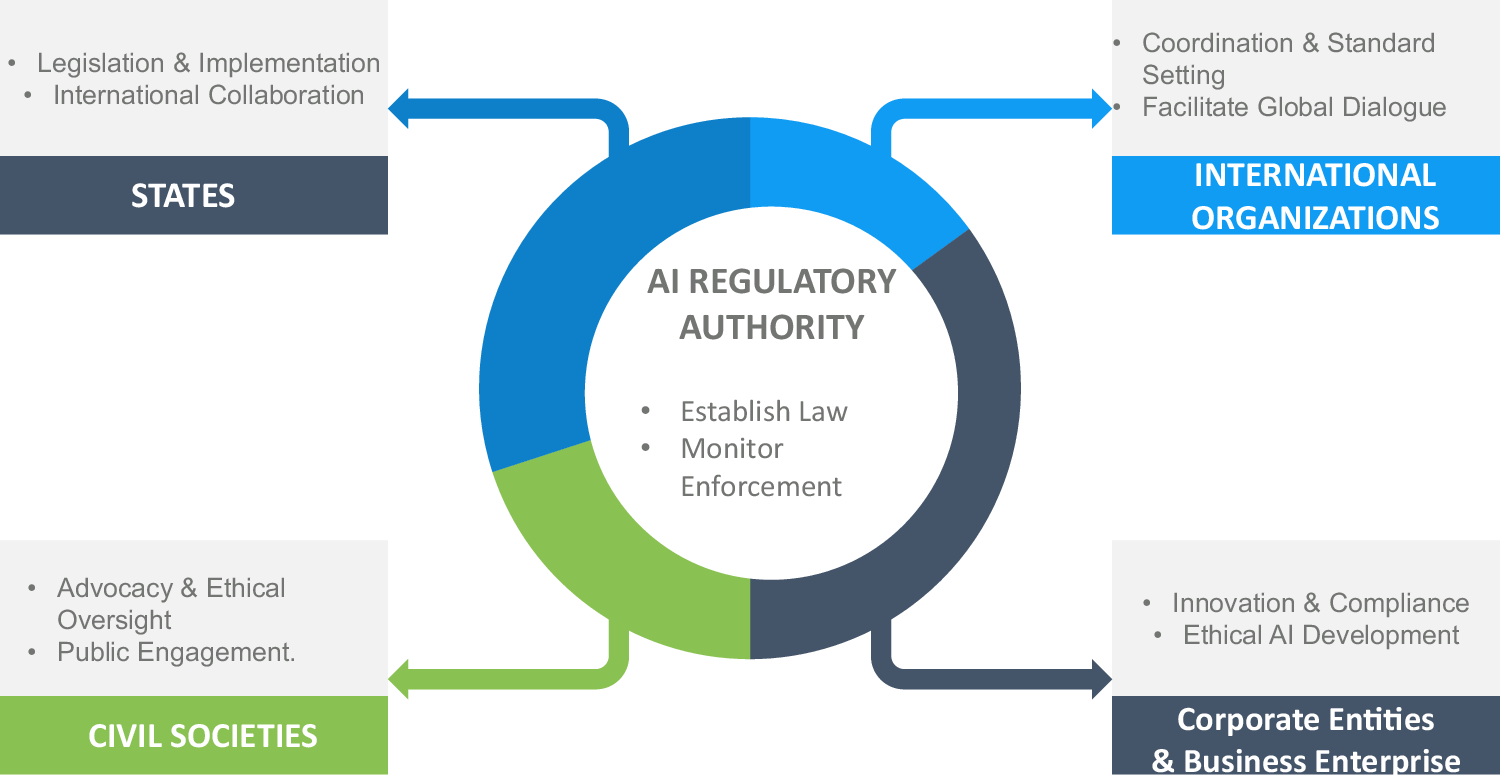

Governments worldwide have introduced frameworks like the European Union’s AI Act, which categorizes AI applications by risk levels. However, enforcement remains uneven across borders. In the United States, recent executive actions emphasize innovation while states experiment with their own rules, creating a patchwork that complicates compliance for multinational entities.

This gap manifests in several ways:

- Lack of accountability structures: Who owns AI outcomes when systems err? Boards and executives often delegate to technical teams without clear reporting lines.

- Inadequate risk assessment protocols: Many organizations deploy AI without comprehensive audits for bias, privacy, or security.

- Fragmented data governance: AI relies on vast datasets, yet few entities maintain unified standards for data quality and ethical sourcing.

Business leaders frequently cite these issues in surveys, noting that governance failures—not technological shortcomings—derail scaling efforts.

Ethical Challenges and Bias in AI Systems

Ethical considerations form another pillar of the governance problem. AI systems learn from historical data, which may embed societal prejudices. Without proactive oversight, tools used in healthcare diagnostics, loan approvals, or educational assessments can disadvantage certain groups.

Governance addresses this through mandatory fairness audits, diverse development teams, and transparent algorithms. Yet, many firms treat ethics as an afterthought rather than a foundational element. International bodies like the OECD advocate for human-centered AI principles, emphasizing transparency and inclusivity.

Consider real-world implications: An AI-powered recruitment tool might favor candidates from specific educational backgrounds due to training data imbalances. Effective governance requires ongoing monitoring, explainability tools, and redress mechanisms for affected individuals. This is not optional compliance—it is essential for maintaining trust and legitimacy.
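One way to make the ongoing monitoring described above concrete is a periodic selection-rate comparison across groups. The following is a minimal sketch, not a complete fairness audit: the function names, the sample data, and the 0.8 review threshold (borrowed from the commonly cited "four-fifths" rule of thumb) are all assumptions chosen for illustration.

```python
from collections import defaultdict

# Assumed review threshold, based on the "four-fifths" rule of thumb.
# An organization would set its own value as a matter of policy.
REVIEW_THRESHOLD = 0.8


def selection_rates(records):
    """Return the share of selected candidates per group.

    `records` is an iterable of (group, selected) pairs, where
    `selected` is True when the tool advanced the candidate.
    """
    totals = defaultdict(int)
    chosen = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            chosen[group] += 1
    return {group: chosen[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so no disparity to measure
    return min(rates.values()) / highest


# Hypothetical screening outcomes for two groups of ten candidates each.
sample = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < REVIEW_THRESHOLD

print(rates, ratio, needs_review)
```

A ratio below the threshold does not prove discrimination; it only flags the tool for human review, which is where explainability tools and redress mechanisms come in.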

Data Privacy, Security, and Surveillance Risks

AI transformation amplifies data-related concerns. Systems process enormous volumes of personal and operational information, raising questions about consent, storage, and misuse. Governance frameworks must enforce strict privacy protocols, such as anonymization techniques and access controls.
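As a rough illustration of one such privacy protocol, the sketch below replaces direct identifiers with keyed hashes before records reach an AI pipeline. Strictly speaking this is pseudonymization rather than full anonymization, since anyone holding the key can recompute the tokens. The function names, field names, and sample record are hypothetical, and real key management is out of scope here.

```python
import hashlib
import hmac


def pseudonymize(value: str, key: bytes) -> str:
    """Replace a value with a keyed SHA-256 token (HMAC), hex-encoded."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def scrub_record(record: dict, key: bytes, direct_identifiers: set) -> dict:
    """Return a copy of `record` with the listed identifier fields tokenized.

    Fields not named in `direct_identifiers` are passed through unchanged.
    """
    return {
        field: pseudonymize(str(value), key) if field in direct_identifiers else value
        for field, value in record.items()
    }


# Hypothetical example: the key would normally come from a secrets manager.
example_key = b"replace-with-a-managed-secret"
example_record = {"name": "Jane Doe", "email": "jane@example.com", "age_band": "30-39"}

scrubbed = scrub_record(example_record, example_key, {"name", "email"})
print(scrubbed)
```

Because the same input and key always yield the same token, records can still be joined across datasets without exposing the underlying identity. Quasi-identifiers such as the age band are left untouched here and would need separate treatment in a real deployment.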

In the absence of strong policies, “shadow AI” (unauthorized tools adopted by employees) proliferates, bypassing official safeguards. Industry reports describe this as a growing challenge for 2026, with internal misuse outpacing external threats.

Security vulnerabilities add another layer. Adversarial attacks can manipulate AI outputs, while weak data governance heightens breach risks. Robust governance integrates cybersecurity into AI lifecycles from design to deployment, aligning with standards such as ISO/IEC 42001 for AI management systems.

Economic and Workforce Implications

AI promises productivity gains but also disrupts labor markets. Automation may shift roles toward higher-skill positions, necessitating reskilling programs. Governance ensures equitable transitions through workforce planning, ethical automation policies, and social safety nets.

Without coordinated efforts, regions or demographics may lag, widening economic divides. Policymakers must collaborate with industry to forecast impacts and invest in education. This holistic approach turns potential disruption into shared prosperity.

Insights from global discussions on these dynamics can be explored further on Wikipedia’s page on the Ethics of Artificial Intelligence.

National Security and Geopolitical Dimensions

On a macro scale, AI governance intersects with national security. Advanced systems influence defense, intelligence, and critical infrastructure. Governance here involves export controls, international treaties, and safeguards against misuse by state or non-state actors.

Geopolitical competition—evident in global summits—underscores the need for cooperative standards rather than isolated policies.

Fragmented approaches risk an “AI arms race,” where speed trumps safety. Effective governance fosters alliances, shared best practices, and mutual verification mechanisms.

Accountability, Transparency, and the “Black Box” Problem

Many AI models operate as opaque “black boxes,” making decisions difficult to audit. Governance demands explainable AI techniques, documentation of decision logic, and human-in-the-loop oversight for high-stakes applications.

Boards increasingly recognize this imperative, with calls for AI literacy among directors. Without it, liability questions remain unresolved: Is the developer, deployer, or user responsible for harms?

Global Coordination Challenges

AI knows no borders, yet governance often remains national. Differing cultural, legal, and economic priorities hinder unified action. Initiatives like the UN’s AI advisory efforts aim to bridge this, but progress is incremental.

Businesses operating internationally face compliance headaches from varying rules. Harmonized standards—perhaps through industry consortia—could alleviate this while promoting innovation.

Case Studies: Lessons from Early Adopters

Enterprises that succeeded with AI did so by embedding governance early. They established cross-functional committees, defined KPIs for ethical performance, and conducted regular impact assessments. Failures, conversely, stemmed from siloed initiatives and reactive fixes.

Public sector examples, such as AI in public service delivery, show similar patterns: Success correlates with clear mandates and citizen engagement.

Pathways Forward: Building Effective AI Governance

Addressing these issues requires:

- Leadership commitment: Boards must prioritize AI oversight in strategy sessions.

- Integrated frameworks: Combine technical, ethical, and legal elements.

- Stakeholder collaboration: Involve employees, communities, and regulators.

- Continuous adaptation: Governance must evolve with technology.

Tools like automated compliance platforms and AI impact assessments can support this. Ultimately, governance transforms AI from a potential risk into a force for sustainable growth.

The Human Element: Cultivating Responsible AI Cultures

Technology alone solves nothing; people drive change. Governance succeeds when it fosters cultures of curiosity, accountability, and ethical reflection. Training programs, clear policies, and incentive structures reinforce these values.

Conclusion: Governance as the Foundation for AI Success

AI transformation holds immense promise, but its realization hinges on governance. By closing the oversight gap, prioritizing ethics, and fostering global cooperation, we can navigate challenges and unlock benefits for all. The question is not whether to adopt AI, but how to govern it wisely.

This comprehensive approach ensures AI serves humanity’s broader goals, aligning innovation with responsibility and shared prosperity. Organizations and governments that invest in governance today will lead tomorrow’s AI-driven world.