What is a Tensor Processing Unit?

A Tensor Processing Unit (TPU) is a custom application-specific integrated circuit (ASIC) designed by Google to speed up machine learning. It targets operations on tensors, the multi-dimensional data arrays that hold a neural network's weights and activations. TPUs excel at fast matrix multiplication for AI training and inference.

Unlike general-purpose chips, TPUs focus only on AI workloads, delivering high performance with low power use.

TPU History in Brief

- 2015: Google deploys first TPU (v1) internally for inference — 15 months from idea to data centers.

- 2016: Public reveal. Google's later published benchmarks report roughly 15–30× faster inference than contemporary CPUs and GPUs on its production workloads.

- 2017: TPU v2 adds training support.

- 2018: Available on Google Cloud for everyone.

- Now (2026): TPUs have reached their seventh generation (Ironwood, aimed at inference) and power Gemini models and other large-scale AI.

Google built TPUs to handle exploding neural network demands efficiently — cheaper and greener than scaling GPUs.

How a TPU Works (Simple Steps)

- Takes tensor data (weights, activations).

- Loads into high-speed on-chip memory.

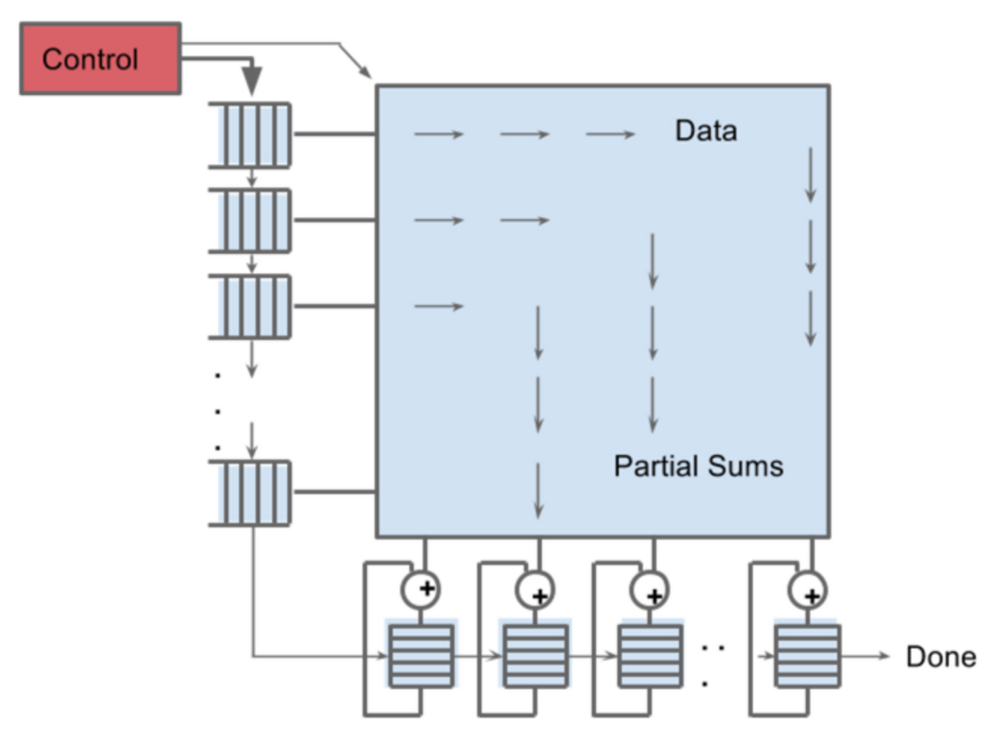

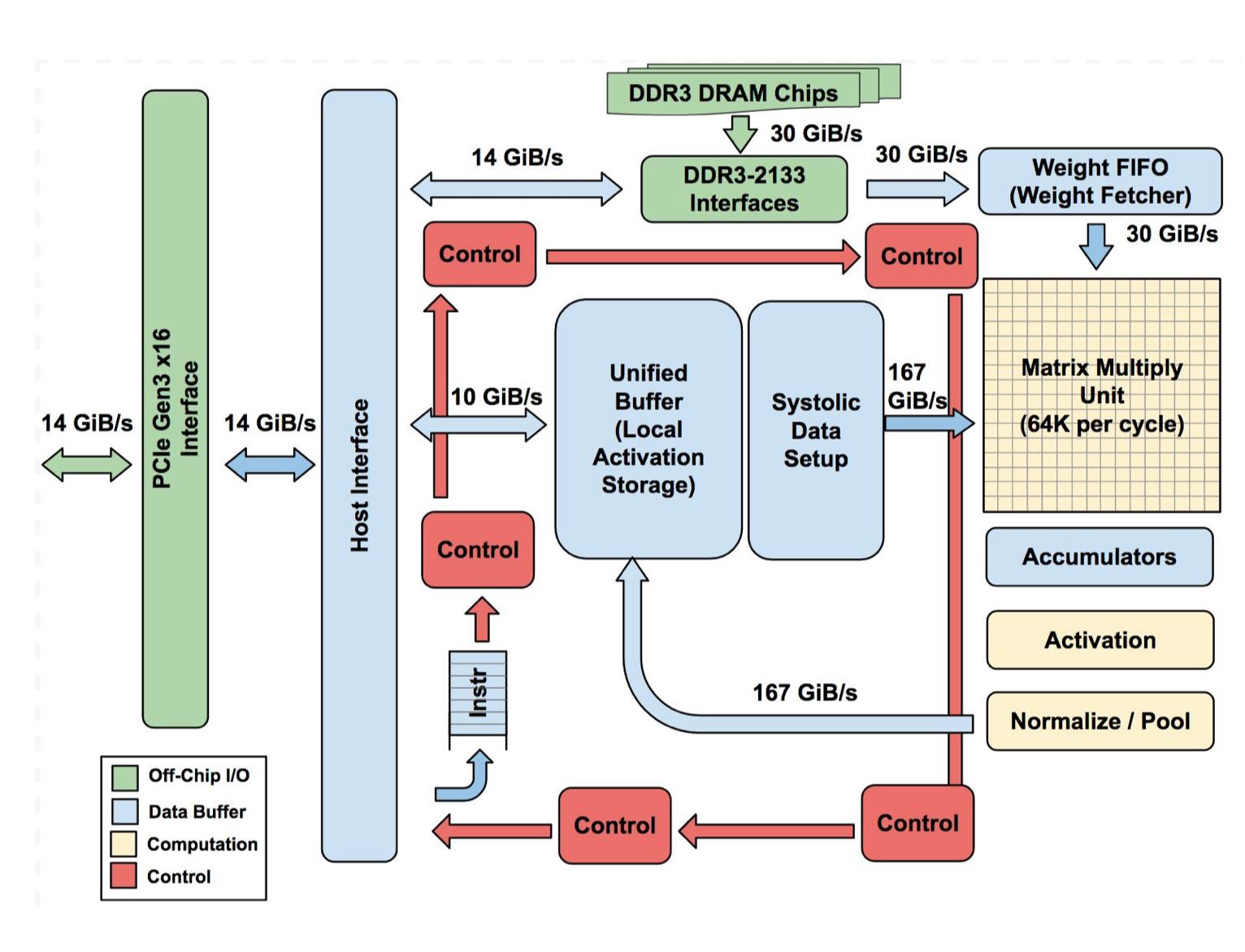

- Uses a systolic array: a grid of multiply-accumulate units (256×256 in TPU v1).

- Performs massive parallel matrix math (core of deep learning).

- Outputs results quickly with minimal data movement.
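The steps above can be sketched as a toy, cycle-by-cycle simulation in plain Python. This is a minimal sketch, not Google's hardware design: it assumes a weight-stationary layout (weights held in the cells, activations flowing right, partial sums flowing down), and the function name `systolic_matmul` and the tiny matrix sizes are illustrative choices.

```python
def systolic_matmul(activations, weights):
    """Multiply activations (M x K) by weights (K x N) on a simulated K x N grid.

    Assumed layout: cell (k, n) permanently holds weights[k][n]. Activations
    enter from the left and move one cell right per cycle; partial sums move
    one cell down per cycle.
    """
    m_rows, k_dim, n_cols = len(activations), len(weights), len(weights[0])
    # Registers between cells: what each cell passed right / down last cycle.
    act = [[0] * n_cols for _ in range(k_dim)]
    psum = [[0] * n_cols for _ in range(k_dim)]
    result = [[0] * n_cols for _ in range(m_rows)]

    total_cycles = m_rows + k_dim + n_cols - 2
    for t in range(total_cycles):
        new_act = [[0] * n_cols for _ in range(k_dim)]
        new_psum = [[0] * n_cols for _ in range(k_dim)]
        for k in range(k_dim):
            for n in range(n_cols):
                if n == 0:
                    # Grid row k is fed one cycle later than row k - 1 (skew).
                    m = t - k
                    a_in = activations[m][k] if 0 <= m < m_rows else 0
                else:
                    a_in = act[k][n - 1]          # from the left neighbour
                p_in = psum[k - 1][n] if k > 0 else 0  # from the cell above
                new_act[k][n] = a_in
                new_psum[k][n] = p_in + weights[k][n] * a_in  # multiply-accumulate
        act, psum = new_act, new_psum
        # The bottom row emits one finished dot product per column per cycle.
        for n in range(n_cols):
            m = t - (k_dim - 1) - n
            if 0 <= m < m_rows:
                result[m][n] = psum[k_dim - 1][n]
    return result, total_cycles


product, cycles = systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
print(product, cycles)  # [[19, 22], [43, 50]] 4
```

Each cell only talks to its neighbours, so every activation is read from memory once and then reused as it moves across the grid. That reuse is what keeps data movement small.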

The systolic design passes data from one unit to the next like a conveyor belt, so intermediate values rarely travel back to memory. That keeps energy use low and throughput high, especially for low-precision math (e.g., 8-bit).
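To make the low-precision point concrete, here is a small sketch of an 8-bit matrix multiply in plain Python. It assumes symmetric per-tensor quantization (one scale per matrix), a common scheme chosen here for illustration rather than something this article specifies, and the helper names are hypothetical.

```python
def quantize(values, num_bits=8):
    """Map a float matrix to signed integers that share one scale factor."""
    q_max = 2 ** (num_bits - 1) - 1  # 127 for 8 bits
    largest = max(abs(v) for row in values for v in row)
    scale = largest / q_max if largest else 1.0
    quantized = [
        [max(-q_max, min(q_max, round(v / scale))) for v in row]
        for row in values
    ]
    return quantized, scale


def int_matmul(a, b):
    """Integer matrix multiply; Python ints stand in for a wide accumulator."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def quantized_matmul(a, b):
    """Quantize both inputs, multiply as integers, then rescale to floats."""
    qa, scale_a = quantize(a)
    qb, scale_b = quantize(b)
    accumulated = int_matmul(qa, qb)
    return [[v * scale_a * scale_b for v in row] for row in accumulated]


a = [[0.5, -1.0], [2.0, 0.25]]
b = [[1.0, 0.0], [-0.5, 0.75]]
print(quantized_matmul(a, b))  # close to [[1.0, -0.75], [1.875, 0.1875]]
```

The result is an approximation: the multiplies run on small integers, which are cheap in silicon, and the rounding error is the price paid for that.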

Key TPU Parts

- Systolic Array / Matrix Multiply Unit (MXU): Thousands of simple ALUs for parallel multiply-add.

- High-bandwidth memory (HBM): Fast memory packaged alongside the chip that holds large models; smaller on-chip buffers feed the MXU.

- Interconnects: Links multiple TPUs in pods/slices for huge scale.

- XLA Compiler: Software that compiles and optimizes model code for TPU hardware.
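As a rough worked example of what "thousands of simple ALUs" means for throughput, the sketch below estimates the peak rate of a single MXU. The 256×256 array size comes from the text above; the 700 MHz clock is the figure Google published for TPU v1 and is not stated in this article, so treat it as an outside assumption. `peak_ops_per_second` is a hypothetical helper.

```python
def peak_ops_per_second(rows, cols, clock_hz):
    """Peak rate of a systolic array, counting a multiply-add as 2 operations."""
    macs_per_cycle = rows * cols  # one multiply-accumulate per cell per cycle
    return macs_per_cycle * 2 * clock_hz


# Assumed TPU v1 figures: 256 x 256 array, 700 MHz clock.
tpu_v1_peak = peak_ops_per_second(256, 256, 700_000_000)
print(f"{tpu_v1_peak / 1e12:.2f} tera-operations per second")  # 91.75
```

This is a ceiling, not a measurement: real workloads reach it only when the array stays fully fed with data.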

TPU Types & Access

- Cloud TPUs: Rent via Google Cloud (v5, v6, etc.) — pods with thousands of chips.

- Edge TPUs: Tiny versions for on-device inference (e.g., Coral board).

Access is mainly cloud-based; Cloud TPUs support TensorFlow, JAX, and PyTorch.

TPU vs GPU vs CPU – Quick Comparison

- CPU: Versatile, few strong cores → general tasks, sequential work.

- GPU: Thousands of cores → parallel graphics/AI, flexible for many models.

- TPU: Specialized systolic arrays → ultra-efficient for tensor ops, best performance-per-watt/dollar on compatible workloads (Google reported 30–80× better performance per watt for TPU v1 than contemporary CPUs and GPUs).

Analogy: the CPU plans the meal, the GPU chops many veggies at once, and the TPU runs an optimized factory line for one recipe.

TPUs win on cost and power for massive Google-scale AI; GPUs lead in flexibility and broad adoption.

Where TPUs Are Used Today

- Training huge models (e.g., Gemini, PaLM).

- Fast inference for Search, Photos, Translate.

- AI research on Google Colab/Kaggle.

- Large-scale cloud workloads.

Challenges

- Less flexible than GPUs (best with optimized code).

- Mainly tied to the Google ecosystem.

- Not for graphics.

Future of TPUs

Newer generations focus on inference efficiency, lower power, and bigger pods. Custom silicon like Ironwood is aimed at what Google calls the “age of inference”.

FAQs

- TPU vs GPU? TPU = specialized efficiency for tensors; GPU = versatile parallel power.

- Can anyone use TPUs? Yes, via Google Cloud or free tiers in Colab.

- Best for? Large-scale AI training/inference where cost & energy matter most.

Conclusion

A Tensor Processing Unit is Google’s powerhouse for AI, built for tensor math to train and run neural networks faster and cheaper. From 2015 inference chips to 2026 superpods, TPUs changed how massive AI works.

What AI task would you run on a TPU? Share below!
